- Fairness in Machine Learning — read this fabulous presentation. Most ML objective functions create models accurate for the majority class at the expense of the protected class. One way to encode “fairness” might be to require similar/equal error rates for protected classes as for the majority population.

- Safety Constraints and Ethical Principles in Collective Decision-Making Systems (PDF) — self-driving cars are an example of collective decision-making between intelligent agents and, possibly, humans. Damn it’s hard to find non-paywalled research in this area. This is horrifying for the list of things you can’t ensure in collective decision-making systems.

- State of Hardware Report (Nate Evans) — rundown of the stats related to hardware startups. (via Renee DiResta)

- A Recent Discussion about DRM (Joi Ito) — strong arguments against including Digital Rights Management in W3C’s web standards (I can’t believe we’re still debating this; it’s such a self-evidently terrible idea to bake disempowerment into web standards).
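To make the fairness item above concrete, here is a minimal sketch of the "similar/equal error rates" idea: compute the error rate separately for each group and look at the gap between them. The function names, group labels, and data are all made up for illustration and are not taken from the presentation.

```python
from collections import defaultdict

def error_rates_by_group(y_true, y_pred, groups):
    """Return the fraction of wrong predictions for each group label."""
    errors = defaultdict(int)
    totals = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        totals[group] += 1
        errors[group] += int(truth != pred)
    return {g: errors[g] / totals[g] for g in totals}

def max_error_gap(rates):
    """Largest difference in error rate between any two groups."""
    return max(rates.values()) - min(rates.values())

# Invented labels and predictions, purely for illustration.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["majority"] * 5 + ["protected"] * 3

rates = error_rates_by_group(y_true, y_pred, groups)
gap = max_error_gap(rates)
```

A fairness constraint in this spirit would require `gap` to stay below some chosen tolerance; what tolerance is acceptable is a policy decision, not something the code can settle.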

"standards" entries

Four short links: 7 April 2016

Fairness in Machine Learning, Ethical Decision-Making, State of Hardware, and Against Web DRM

“Internet of Things” is a temporary term

The O'Reilly Radar Podcast: Pilgrim Beart on the scale, challenges, and opportunities of the IoT.

Subscribe to the O’Reilly Radar Podcast to track the technologies and people that will shape our world in the years to come.

In this week’s Radar Podcast, O’Reilly’s Mary Treseler chatted with Pilgrim Beart about co-founding his company, AlertMe, and about why the scale of the Internet of Things creates as many challenges as it does opportunities. He also talked about the “gnarly problems” emerging from consumer wants and behaviors.

How we got to the HTTP/2 and HPACK RFCs

A brief history of SPDY and HTTP/2.

Download HTTP/2.

SPDY was an experimental protocol, developed at Google and announced in mid-2009, whose primary goal was to try to reduce the load latency of web pages by addressing some of the well-known performance limitations of HTTP/1.1. Specifically, the outlined project goals were set as follows:

- Target a 50% reduction in page load time (PLT).

- Avoid the need for any changes to content by website authors.

- Minimize deployment complexity, avoid changes in network infrastructure.

- Develop this new protocol in partnership with the open-source community.

- Gather real performance data to (in)validate the experimental protocol.

To achieve the 50% PLT improvement, SPDY aimed to make more efficient use of the underlying TCP connection by introducing a new binary framing layer to enable request and response multiplexing, prioritization, and header compression.
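As a rough illustration of what a binary framing layer buys you, the toy sketch below tags each chunk of a message with a stream ID and a length, interleaves chunks from several messages onto one byte stream, and reassembles them on the other side. The 6-byte header layout and all function names are hypothetical; this is not the SPDY or HTTP/2 wire format, and it leaves out prioritization and header compression entirely.

```python
import struct
from collections import defaultdict
from itertools import zip_longest

# Hypothetical toy header: 4-byte stream ID + 2-byte payload length, big-endian.
# This is NOT the SPDY or HTTP/2 wire format.
HEADER = struct.Struct(">IH")

def frames(stream_id, payload, chunk_size=4):
    """Split one message into length-prefixed frames tagged with its stream ID."""
    out = []
    for i in range(0, len(payload), chunk_size):
        chunk = payload[i:i + chunk_size]
        out.append(HEADER.pack(stream_id, len(chunk)) + chunk)
    return out

def multiplex(messages, chunk_size=4):
    """Interleave frames from several streams round-robin onto one byte stream."""
    per_stream = [frames(sid, data, chunk_size) for sid, data in messages.items()]
    wire = b""
    for batch in zip_longest(*per_stream):
        wire += b"".join(f for f in batch if f is not None)
    return wire

def demultiplex(wire):
    """Walk the byte stream frame by frame and reassemble each stream's payload."""
    streams = defaultdict(bytes)
    offset = 0
    while offset < len(wire):
        stream_id, length = HEADER.unpack_from(wire, offset)
        offset += HEADER.size
        streams[stream_id] += wire[offset:offset + length]
        offset += length
    return dict(streams)

messages = {1: b"GET /index.html", 3: b"GET /style.css", 5: b"GET /app.js"}
wire = multiplex(messages)
roundtrip = demultiplex(wire)
```

Because every frame carries its own stream ID, the receiver can rebuild each message even though the chunks arrive interleaved on a single connection.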

Not long after the initial announcement, Mike Belshe and Roberto Peon, both software engineers at Google, shared their first results, documentation, and source code for the experimental implementation of the new SPDY protocol:

So far we have only tested SPDY in lab conditions. The initial results are very encouraging: when we download the top 25 websites over simulated home network connections, we see a significant improvement in performance—pages loaded up to 55% faster.

— A 2x Faster Web Chromium Blog

Fast-forward to 2012 and the new experimental protocol was supported in Chrome, Firefox, and Opera, and a rapidly growing number of sites, both large (e.g. Google, Twitter, Facebook) and small, were deploying SPDY within their infrastructure. In effect, SPDY was on track to become a de facto standard through growing industry adoption.

Four short links: 23 March 2015

Agricultural Robots, Business Model Design, Simulations, and Interoperable JSON

- Swarmfarm Robotics — His previous weed sprayer weighed 21 tonnes, measured 36 metres across its spray unit, guzzled diesel by the bucketload and needed a paid driver who would only work limited hours. Two robots working together on Bendee effortlessly sprayed weeds in a 70ha mung-bean crop last month. Their infra-red beams picked up any small weeds among the crop rows and sent a message to the nozzle to eject a small chemical spray. Bate hopes to soon use microwave or laser technology to kill the weeds. Best of all, the robots do the work without guidance. They work 24 hours a day. They have in-built navigation and obstacle detection, making them robust and able to decide if an area of a paddock should not be traversed. Special swarming technology means the robots can detect each other and know which part of the paddock has already been assessed and sprayed.

- Route to Market (Matt Webb) — The route to market is not what makes the product good. […] So the way you design the product to best take it to market is not the same process to make it great for its users.

- Explorable Explanations — points to many sweet examples of interactive explorable simulations/explanations.

- I-JSON (Tim Bray) — I-JSON is just a note saying that if you construct a chunk of JSON and avoid the interop failures described in RFC 7159, you can call it an “I-JSON Message.” If any known JSON implementation creates an I-JSON message and sends it to any other known JSON implementation, the chance of software surprises is vanishingly small.
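For the I-JSON item above, a minimal sketch of a stricter parser might reject two of the interop hazards RFC 7159 warns about: duplicate object names and integers too large to be represented exactly as an IEEE 754 double. The names `load_strict` and `InteropError` are hypothetical, and this covers only a subset of the pitfalls, so passing it does not make a message I-JSON.

```python
import json

# Largest integer magnitude an IEEE 754 double represents exactly.
MAX_SAFE_INT = 2**53 - 1

class InteropError(ValueError):
    """Raised when the input uses a construct known to cause interop trouble."""

def _reject_duplicates(pairs):
    # json.loads passes each object's members as (name, value) pairs in order.
    seen = {}
    for key, value in pairs:
        if key in seen:
            raise InteropError(f"duplicate object name: {key!r}")
        seen[key] = value
    return seen

def _check_int(text):
    value = int(text)
    if abs(value) > MAX_SAFE_INT:
        raise InteropError(f"integer outside interoperable range: {text}")
    return value

def load_strict(text):
    """Parse JSON, refusing duplicate names and oversized integers."""
    return json.loads(text, object_pairs_hook=_reject_duplicates, parse_int=_check_int)

doc = load_strict('{"id": 42, "tags": ["a", "b"]}')
```

Python's default parser silently keeps the last value for a repeated name, which is exactly the kind of quiet disagreement between implementations that the note is trying to avoid.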

The complexity of the IoT requires experience design solutions

Claire Rowland on interoperability, networks, and latency.

Register to attend our new online conference (May 20, 2015) to hear Claire Rowland and other design leaders discuss experience design and the Internet of Things.

The Internet of Things (IoT) is challenging designers to rethink their craft. I recently sat down with Claire Rowland, independent designer and author of the forthcoming book Designing Connected Products to talk about the changing design landscape.

During our interview, Rowland brought up three points that resonated with me.

Interoperability and the Internet of Things

This is an IoT issue that affects everyone — engineers, designers, and consumers alike. Rowland recalled a fitting quote she’d once heard to describe the standards landscape: “Standards are like toothbrushes, everyone knows you need one, but nobody wants to use anybody else’s.”

Designers, like everyone else involved with the Internet of Things, will need equal amounts of patience and agility as the standards issue works itself out. Rowland explains interoperability:

“Interoperability is about devices and applications and services being able to interact with other devices, applications, and services regardless of the hardware architecture or who made them or what kind of software they run. Basically, IoT — that means, the devices and services for which we may connect our applications — can discover and communicate and coordinate with other devices and services, no matter who made them.

“It also means that if you want to substitute, if you have a fitness tracker from one manufacturer and you want to replace it with a fitness tracker from another manufacturer, in an ideal world, you would be able to say, I’ve changed, but I haven’t lost my data. That is a fairly seamless experience.”

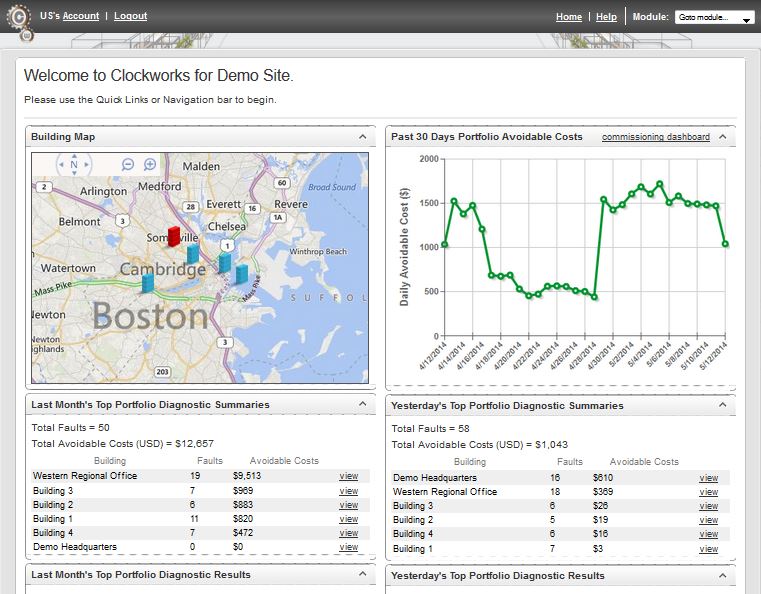

Smarter buildings through data tracking

Buildings are ready to be smart — we just need to collect and monitor the data.

Buildings, like people, can benefit from lessons built up over time. Just as Amazon.com recommends books based on purchasing patterns or doctors recommend behavior change based on what they’ve learned by tracking thousands of people, a service such as Clockworks from KGS Buildings can figure out that a boiler is about to fail based on patterns built up through decades of data.

I had the chance to be enlightened about intelligent buildings through a conversation with Nicholas Gayeski, cofounder of KGS Buildings, and Mark Pacelle, an engineer with experience in building controls who has written for O’Reilly about the Internet of Things. Read more…

8 key attributes of Bluetooth networking

Bluetooth networking within the Internet of Things

This article is part of a series exploring the role of networking in the Internet of Things.

Previously, we set out to choose the wireless technology standard that best fits the needs of our hypothetical building monitoring and energy application. Going forward, we will look at candidate technologies within all three networking topologies discussed earlier: point-to-point, star, and mesh. We’ll start with Bluetooth, the focus of this post.

Bluetooth is the most common wireless point-to-point networking standard, designed for exchanging data over short distances. It was developed to replace the cables connecting portable and/or fixed devices.

Today, Bluetooth is well suited for relatively simple applications where two devices need to connect with minimal configuration setup, like a button press, as in a cell phone headset. The technology is used to transfer information between two devices that are near each other in low-bandwidth situations such as with tablets, media players, robotics systems, handheld and console gaming equipment, and some high-definition headsets, modems, and watches.

When considering Bluetooth for use in our building application, we must consider the capabilities of the technology and compare these capabilities to the eight application attributes outlined in my previous post. Let’s take a closer look at Bluetooth across these eight key attributes.

Health IT is a growth area for programmers

New report covers areas of innovation and their difficulties

O’Reilly recently released a report I wrote called The Information Technology Fix for Health: Barriers and Pathways to the Use of Information Technology for Better Health Care. Along with our book Hacking Healthcare, I hope this report helps programmers who are curious about Health IT see what they need to learn and what they in turn can contribute to the field.

Computers in health are a potentially lucrative domain, to be sure, given a health care system through which $2.8 trillion, or $8,915 per person, passes each year in the US alone. Interest by venture capitalists ebbs and flows, but the impetus to creative technological hacking is strong, as shown by the large number of challenges run by governments, pharmaceutical companies, insurers, and others.

Some things you should consider doing include:

- Join open source projects

  Numerous projects to collect and process health data are being conducted as free software; find one that raises your heartbeat and contribute. For instance, the most respected health care system in the country, VistA from the Department of Veterans Affairs, has new leadership in OSEHRA, which is trying to create a community of vendors and volunteers. You don’t need to understand the oddities of the MUMPS language on which VistA is based to contribute, although I believe some knowledge of the underlying database would be useful. But there are plenty of other projects too, such as the OpenMRS electronic record system and the projects that cooperate under the aegis of Open Health Tools.

Business models that make the Internet of Things feasible

The bid for widespread home use may drive technical improvements.

For some people, it’s too early to plan mass consumerization of the Internet of Things. Developers are contentedly tinkering with Arduinos and clip cables, demonstrating cool one-off applications. We know that home automation can save energy, keep the elderly and disabled independent, and make life better for a lot of people. But no one seems sure how to realize this goal, outside of security systems and a few high-end items for luxury markets (like the Nest devices, now being integrated into Google’s grand plan).

But what if the willful creation of a mass consumer market could make the technology even better? Perhaps the Internet of Things needs a consumer focus to achieve its potential. This view was illuminated for me through a couple of recent talks with Mike Harris, CEO of the home automation software platform Zonoff.

3 topologies driving IoT networking standards

The importance of network architecture on the Internet of Things

This article is part of a series exploring the role of networking in the Internet of Things.

There are a lot of moving parts in the networking for the Internet of Things; a lot to sort out between WiFi, WiFi LP, Bluetooth, Bluetooth LE, Zigbee, Z-Wave, EnOcean and others. Some standards are governed by open, independent standards bodies, while others are developed by a single company and are being positioned as de facto standards. Some are well established, others are in the early adoption stage. All were initially developed to meet unique application-specific requirements such as range, power consumption, bandwidth, and scalability. Although these are familiar issues, they take on a new urgency in IoT networks.

To begin establishing the right networking technology for your application, it is important to first understand the network architecture, or the network topology, that is supported by each technology standard. The networking standards being used today in IoT can be categorized into three basic network topologies: point-to-point, star, and mesh. Read more…