"Java" entries

The cloud-native future

Moving beyond ad-hoc automation to take advantage of patterns that deliver predictable capabilities.

Can you release new features to your customers every week? Every day? Every hour? Do new developers deploy code on their first day, or even during job interviews? Can you sleep soundly after a new hire’s deployment knowing your applications are all running perfectly fine? A rapid release cadence with the processes, tools, and culture that support the safe and reliable operation of cloud-native applications has become the key strategic factor for software-driven organizations who are shipping software faster with reduced risk. When you are able to release software more rapidly, you get a tighter feedback loop that allows you to respond more effectively to the needs of customers.

Continuous delivery is why software is becoming cloud-native: shipping software faster to reduce the time of your feedback loop. DevOps is how we approach the cultural and technical changes required to fully implement a cloud-native strategy. Microservices is the software architecture pattern used most successfully to expand your development and delivery operations and avoid slow, risky, monolithic deployment strategies. It’s difficult to succeed, for example, with a microservices strategy when you haven’t established a “fail fast” and “automate first” DevOps culture.

Continuous delivery, DevOps, and microservices describe the why, how, and what of being cloud-native. These competitive advantages are quickly becoming the ante to play the software game. In the most advanced expression of these concepts they are intertwined to the point of being inseparable. This is what it means to be cloud-native.

How reactive applications adapt

The principles of reactive applications facilitate adaptation.

One of the fascinating things found in nature is the ability of a species to adapt to its changing environment. The canonical example of this is Britain’s Peppered Moth. When newly industrialized Great Britain became polluted in the nineteenth century, slow-growing, light-colored lichens that covered trees died and resulted in a blackening of the trees bark. The impact of this was quite profound: lightly-colored peppered moths, which historically had been well camouflaged and the majority, now found themselves the obvious target of many a hungry bird. Their rare, dark-colored sibling, who had been conspicuous before, now blended into their recently polluted ecosystem. As the birds, changed from eating dark-colored to light-colored moths, the previously common light-colored moth became the minority, and the dynamics of Britain’s moth population changed.

So what do moths have to do with programming? Read more…

Learn a C-style language

Improve your odds with the lingua franca of computing.

You have a lot of choices when you’re picking a programming language to learn. If you look around the web development world, you’ll see a lot of JavaScript. At universities and high schools, you’ll often find Python used as a teaching language. If you go to conferences with language theorists, like Strange Loop, you’ll hear a lot about functional languages, such as Haskell, Scala, and Erlang. This level of choice is good: many languages mean that the overall state of the field is continually evolving, and coming up with new solutions. That choice also leads to a certain amount of confusion regarding what you should learn. It’s not possible to learn every language out there, even if you wanted to. Depending on the area you’re in, the choice of language may be made for you. For the overall health of your career, and to provide you the widest range of future opportunities, the single most useful language-related thing you can do is learn a C-style language.

A boring old C-style language just like millions of developers learned before you, going back to the 1980s and earlier. It’s not flashy, it’s usually not cutting edge, but it is smart. Even if you don’t stick with it, or program in it on a daily basis, having a C-style language in your repertoire is a no-brainer if you want to be taken seriously as a developer.

Read more…

Battery performance in Android M

Exploring the new Android M battery performance features.

It has been a long held personal belief that most battery drain issues on smartphone devices are due to applications that are improperly tuned. I work very closely with mobile developers to help optimize mobile apps for speed and battery life with AT&T’s own Application Resource Optimizer. I am also in the process of finishing up a book on High Performance Android Apps that will be published later this summer. So I am always excited to see mobile application performance hit the center stage.

Last month, Google held its annual Google I/O conference, where they announce new products, tools and features. This year, with the release of the Android M developer preview, performance of mobile devices/battery life and app performance were on the center stage (and unveiled at the keynote!). Lets look at the new features and tools available to users and developers to make Android’s battery life better.

Read more…

Embracing Java for the Internet of Things

Technology executive and enthusiast Mike Milinkovich on Java's role in shaping future enterprise development.

Hardware and software are coming together in new and exciting ways. To get a better sense of this excitement, one need look no further than the nascent explosion of connected devices and technologies. But how do we best cater development for these emerging paradigms, and how do more mature languages, like Java, fit into the equation?

I spoke with Mike Milinkovich, Executive Director at the Eclipse Foundation. Mike and his team are currently leading the charge to promote open source IoT protocols, runtimes, frameworks, and SDKs across a variety of languages, including Java. Eclipse’s IoT stack for Java is already being utilized by such companies as Philips, Samsung, and eQ-3. Here, he talks about Java’s unique standing in this emerging marketplace, and the impact of the open source community on IoT development.

10 Elasticsearch metrics to watch

Track key metrics to keep Elasticsearch running smoothly.

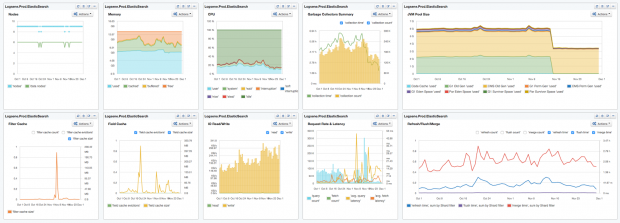

Elasticsearch is booming. Together with Logstash, a tool for collecting and processing logs, and Kibana, a tool for searching and visualizing data in Elasticsearch (aka, the “ELK” stack), adoption of Elasticsearch continues to grow by leaps and bounds. When it comes to actually using Elasticsearch, there are tons of metrics generated. Instead of taking on the formidable task of tackling all-things-metrics in one blog post, I’ll take a look at 10 Elasticsearch metrics to watch. This should be helpful to anyone new to Elasticsearch, and also to experienced users who want a quick start into performance monitoring of Elasticsearch.

Most of the charts in this piece group metrics either by displaying multiple metrics in one chart, or by organizing them into dashboards. This is done to provide context for each of the metrics we’re exploring.

To start, here’s a dashboard view of the 10 Elasticsearch metrics we’re going to discuss:

10 Elasticsearch metrics in one compact SPM dashboard. This dashboard image, and all images in this post, are from Sematext’s SPM Performance Monitoring tool.

Now, let’s dig into each of the 10 metrics one by one and see how to interpret them.

Teaching kids how to code with Minecraft

Maintaining a focus on fun and interactivity keeps students engaged and enthused while learning Java.

I consider myself extremely fortunate to be involved with Devoxx4Kids, a Not-for-Profit, 501(c)(3) registered organization in the U.S., whose goal is to deliver Science Technology Engineering Mathematics (STEM) workshops to kids at an early age around the world. We delivered over 40 workshops in the U.S. alone last year on topics ranging from Python, Scratch, and Minecraft modding to NAO robots, Raspberry Pi, Arduino, and Little Circuits. Globally, we’ve delivered over 350 workshops and connected with approximately 5,000 students, with over 30% girls. Attendees from these workshops often leave with unique and inspirational stories to share. Read more…

3 simple reasons why you need to learn Scala

How Scala will help you grow as a Java developer.

With Scala Days nearly upon us, the Fort Mason Center in San Francisco will be awash with developers excited to share ideas and explore the latest use-cases in this “best of both worlds” language. Scala has come a long way from its humble origins at the École Polytechnique Fédérale de Lausanne, but with the fusion of functional and object-oriented programming continuing to pick up steam across leading-edge enterprises and start-ups, there’s no better time than right now to stop dabbling with code snippets and begin mastering the basics. Here are three simple reasons why learning Scala will help you grow as a Java developer, as excerpted from Jason Swartz’s new book Learning Scala.

1. Your code will be better

You will be able to start using functional programming techniques to stabilize your applications and reduce issues that arise from unintended side effects. By switching from mutable data structures to immutable data structures and from regular methods to pure functions that have no effect on their environment, your code will be safer, more stable, and much easier to comprehend.

Read more…

Full-stack tensions on the Web

How much do you need to know?

I expected that CSSDevConf would be primarily a show about front-end work, focused on work in clients and specifically in browsers. I kept running into conversations, though, about the challenges of moving between the front and back end, the client and the server side. Some were from developers suddenly told that they had to become “full-stack developers” covering the whole spectrum, while others were from front-end engineers suddenly finding a flood of back-end developers tinkering with the client side of their applications. “Full-stack” isn’t always a cheerful story.

I expected that CSSDevConf would be primarily a show about front-end work, focused on work in clients and specifically in browsers. I kept running into conversations, though, about the challenges of moving between the front and back end, the client and the server side. Some were from developers suddenly told that they had to become “full-stack developers” covering the whole spectrum, while others were from front-end engineers suddenly finding a flood of back-end developers tinkering with the client side of their applications. “Full-stack” isn’t always a cheerful story.

In the early days of the Web, “full-stack” was normal. While there were certainly people who focused on running web servers or designing sites as beautiful as the technology would allow, there were lots of webmasters who knew how to design a site, write HTML, manage a server, and maybe write some CGI code for early applications.

Formal separation of concerns among HTML, CSS, and JavaScript made it easier to share responsibilities among specialists. As the dot-com boom proceeded, specialization accelerated, with dedicated designers, programmers, and sysadmins coming to the work. Perhaps there were too many titles.

Even as the bust set in, specialization remained the trend because Web projects — especially on the server side — had grown far more complicated. They weren’t just a server and a few scripts, but a complete stack, including templates, logic, and usually a database. Whether you preferred the LAMP stack, a Microsoft ASP stack, or perhaps Java servlets and JSP, the server side rapidly became its own complex arena. Intranet development in particular exploded as a way to build server-based applications that could (cheaply) connect data sources to users on multiple platforms. Writing web apps was faster and cheaper than writing desktop apps, with more tolerance for platform variation.

Read more…

Java 8 streams API and parallelism

Leveraging intermediate and terminal operators to process large collections.

In the last post in this series, we learned about functional interfaces and lambdas. In particular, we looked at the ITrade functional interface, and filtered a collection of trades to generate a specific value. Prior to the feature updates made available in Java 8, running bulky operations on collections at the same time was ineffective and often cumbersome. They were restricted from performing efficient parallel processing due to the inherent explicit iteration that was being used. There was no easy way of splitting a collection to apply a piece of functionality on individual elements that then ran on multi-core machine architectures — that is, until the addition of Java 8’s Streams API.